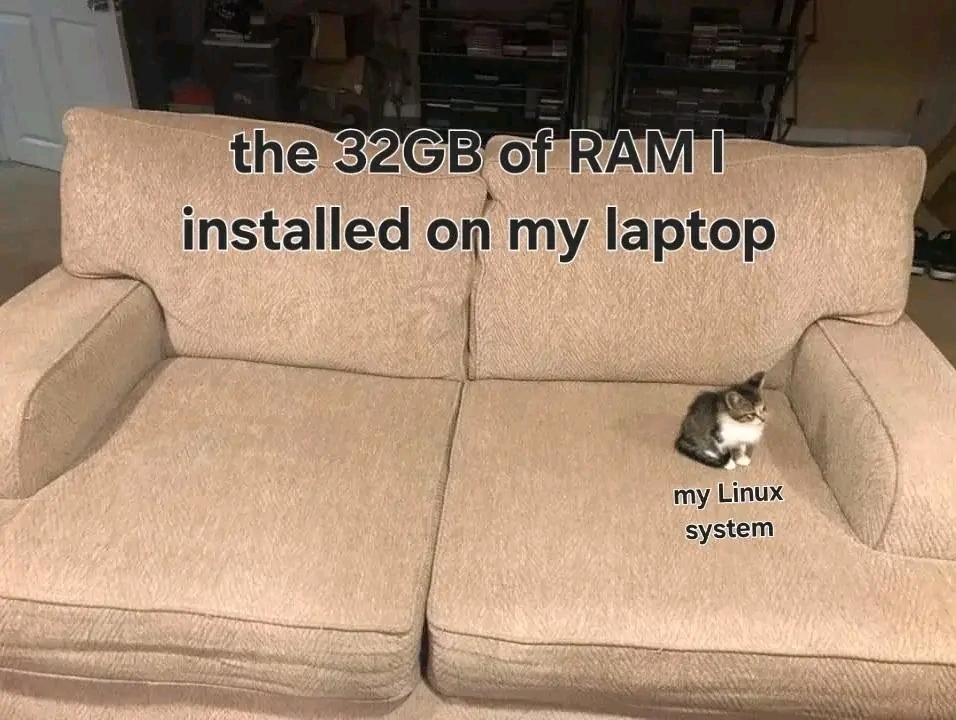

"Free" memory is usually used for cache. Instead of waiting on the disk, the system can read data straight from RAM after the first access. The more RAM you have, the more otherwise-idle memory there is to use as cache. My machine often has over 20 GB of RAM used as cache. You can see this with `free -m`. IIRC both GNOME's and KDE's system monitors also show that now.
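If you want to see the same numbers without `free`, here's a rough sketch that reads `/proc/meminfo` directly. The helper names (`parse_meminfo`, `summarize`) are made up for this example, and the "buff/cache" figure is my approximation (Buffers + Cached + SReclaimable), so it may not match your version of `free` exactly.

```python
from pathlib import Path


def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of field name -> integer value.

    Most fields are reported in kB; a few (e.g. HugePages_Total) are plain counts.
    """
    fields = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines that are not "Name: value"
        parts = rest.split()
        if parts and parts[0].isdigit():
            fields[name.strip()] = int(parts[0])
    return fields


def summarize(fields):
    """Return total, free, buff/cache and available memory in MiB.

    Assumption: buff/cache is approximated as Buffers + Cached + SReclaimable.
    """
    cache_kib = (
        fields.get("Buffers", 0)
        + fields.get("Cached", 0)
        + fields.get("SReclaimable", 0)
    )
    return {
        "total": fields.get("MemTotal", 0) // 1024,
        "free": fields.get("MemFree", 0) // 1024,
        "buff/cache": cache_kib // 1024,
        "available": fields.get("MemAvailable", 0) // 1024,
    }


if __name__ == "__main__":
    meminfo = Path("/proc/meminfo")
    if meminfo.exists():  # Linux only
        for key, mib in summarize(parse_meminfo(meminfo.read_text())).items():
            print(f"{key:>10}: {mib} MiB")
```

The number to watch is "available", not "free": cache gets dropped when programs actually need the memory, so a low "free" with a high "available" is normal.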

Android Studio: *big fat cat in the middle of the sofa*

Work gave me a 16gb laptop for Android development.

It took up to 20 minutes to incrementally compile.

They eventually bumped me up to 32gb when I complained enough that my swap file was 20gb.

Suddenly incremental compiles are <2 min

Kid named programs above OS-level:

I just took a Core i5, 6 GB RAM laptop from 2011 and reinstalled Linux Mint and put in a 1 TB SSD. The difference between that and Ubuntu 23.10 and a 750 GB 5400 RPM drive was like night and day.

That’s nice… if you only plan to run a bare operating system. Try processing some big-ass data files with R.

This just means you're future proofed

You haven't tried compiling Unreal Engine or clicking too often on Subdivision Surface in Blender. But yeah, you don't need it for playing games.

32gb is just enough for a homelab

In a similar fashion I got my son's old netbook. It has 32GB of flash as its storage medium. 27GB were in use by Windows, Office, and Firefox. User file size was negligible. Then it ran into problems because it wanted to download an 8GB update.

Now it runs Kubuntu, which uses about 4GB with LibreOffice and a load of other things.

I feel like recently developed games and apps expect the user to have a "modern" amount of RAM, meaning that the devs don't give a crap about optimizing RAM usage.

Transcoding an HDR Blu-ray to H.265 filled it up pretty quick and I'm about to start dabbling with game development/3D modeling.

I've also filled it up pretty quick learning how fast various data structures are in which situations. You don't really see a difference in speed until you get into the billions of items, at least for Python.

My install regularly balloons to 24gb... it's probably Zoom's fault, but still

Me on my 32GB ThinkPad that spends 99% of its time running only a browser and email client

It's great that the system is so efficient. But things do come up. I once worked with an LSP server that was so hungry that I had to upgrade from 32 to 64gb to stop the OOM crashes. (Tbf I only ran out of memory when running the LSP server and compiler at the same time - but hey, I have work to do!) But now since I'm working in a different area I'm just way over-RAMed.

Me, a heavy KVM user, needing a lot of RAM

Reminds me of a comment I made a few days ago that some people thought was a joke but nope, I was being serious.

Tell that to the 3000 browser tabs I have open, two instances of VS Code, the multithreaded Python app I’m running and developing, and the several-gigabyte dataset that’s active in memory.

Some days, even 64 GB isn’t enough.

More is more.

Big dawg here running a supercomputer with 4 gigs of RAM. /s

Always see my system chilling at 5 or 6 gb

linuxmemes

I use Arch btw
